Your data architecture is only as good as its underlying principles. Without the right intent, standards, and universal language, it’s difficult to get your strategy off the ground.

So, before you use customer data to drive analytics operations, take a step back and consider whether you’ve laid the right foundations. Ultimately, following the right data architecture principles will help strengthen your data strategy and enable you to develop pipelines that accelerate time to value and improve data quality.

The importance of a strong data architecture

The right data architecture is central to the success of your data strategy. It’s made up of all the policies, rules, and standards that govern and define the type of data you’re collecting, including:

- How you use your data

- Where you store your data

- How you manage your data and integrate it across your business

Getting this right is key to any successful data strategy. Failure to implement data architecture best practices often leads to misalignment issues, such as a lack of cohesion between business and technical teams.

But how can your business make sure your data architecture strategy keeps up with modern business demands?

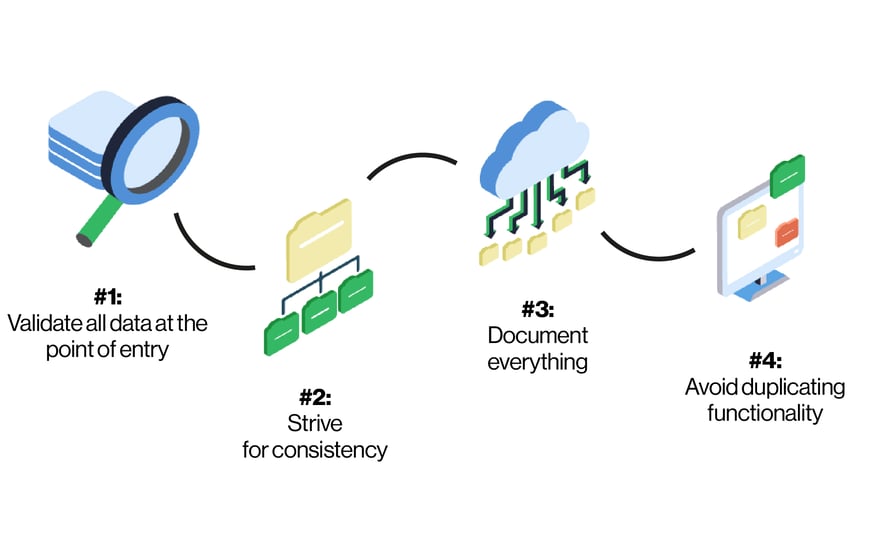

Four of the best data architecture principles you need to know

To gain full control over your data, you need to structure your data architecture in a clear and accessible way. To do so, you'll need to follow the best data architecture principles.

Data architecture principles are the rules that govern how you collect, use, manage, and integrate your data. Ultimately, these principles keep your data architecture consistent, clean, and accountable, and help to improve your organization’s overall data strategy.

Here are the four data architecture best practices for you to follow.

1. Validate all data at the point of entry

Did you know that bad data quality has a direct impact on the bottom line of 88 percent of companies? To avoid common data errors and improve the overall health of your data, you need to design your architecture to flag and correct issues as early as possible.

However, it’s tricky to spot errors when you have large datasets, complex manual processes, and little support. Fortunately, investing in a data integration platform that validates your data automatically at the point of entry will prevent future damage and stop bad data from spreading throughout your system.

What’s more, filtering out anomalies with an automated tool will help minimize the time it takes to cleanse and prepare your data. With so much data collected every day, it’s vital you only keep the information that provides value, creating a sustainable data validation and error correction loop.
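As a rough illustration of validating at the point of entry, the sketch below checks each incoming record before it is accepted and sets aside anything that fails, along with the reasons. The field names (`customer_id`, `email`), the email pattern, and the function names are assumptions made for this example, not part of any particular platform.

```python
import re

# Deliberately loose pattern, assumed for illustration only.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_customer(record):
    """Return a list of problems found in one incoming record (empty if clean)."""
    errors = []
    if not str(record.get("customer_id") or "").strip():
        errors.append("customer_id is missing")
    email = str(record.get("email") or "")
    if not EMAIL_PATTERN.match(email):
        errors.append(f"email looks malformed: {email!r}")
    return errors


def ingest(records):
    """Split incoming records into accepted rows and rejected rows with reasons."""
    accepted, rejected = [], []
    for record in records:
        errors = validate_customer(record)
        if errors:
            # Keep the reasons so the record can be corrected and resubmitted.
            rejected.append((record, errors))
        else:
            accepted.append(record)
    return accepted, rejected
```

Keeping the rejected records together with their error messages, rather than silently dropping them, is what makes the correction loop possible: someone (or something) can fix the record and send it through again.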

2. Strive for consistency

Using a common vocabulary for your data architecture will help to reduce confusion and dataset divergence, making it easier for developers and non-developers to collaborate on the same projects. This provides your team with a ‘single version of the truth’ and allows you to create data models that correctly define entity relationships and translate them into executable code.

Consistency is key here as it ensures everyone is working from the same core definitions. For example, you should always use the same column names to enter customer data, regardless of the application or business function. The moment you stray from this common vocabulary is the moment you lose control of both your data architecture and data governance.
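One way to enforce a common vocabulary is to map every source-specific column name onto a single agreed name before the data goes any further. The sketch below assumes a small, hypothetical mapping (`CANONICAL_COLUMNS`) and rejects any column it does not recognize, so new names cannot creep in unnoticed.

```python
# Hypothetical shared vocabulary: source-specific names on the left,
# the one agreed name on the right.
CANONICAL_COLUMNS = {
    "cust_id": "customer_id",
    "customerid": "customer_id",
    "customer_id": "customer_id",
    "e_mail": "email",
    "email_address": "email",
    "email": "email",
}


def normalize_key(name):
    """Lower-case a column name and unify spaces and hyphens to underscores."""
    return name.strip().lower().replace(" ", "_").replace("-", "_")


def to_canonical(record):
    """Rename a record's columns to the shared vocabulary, or fail loudly."""
    canonical = {}
    for name, value in record.items():
        key = normalize_key(name)
        if key not in CANONICAL_COLUMNS:
            raise KeyError(f"Column {name!r} is not in the shared vocabulary")
        canonical[CANONICAL_COLUMNS[key]] = value
    return canonical
```

Raising an error on unknown columns is a design choice: it forces a conversation about the vocabulary whenever a new name appears, instead of letting datasets quietly diverge.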

3. Document everything

Regular ‘data discoveries’ will allow your organization to check how much data it’s collecting, which datasets are aligned, and which applications need updating. To achieve this, you need transparency into each business function to compile a broad overview of your data usage.

But to gain complete visibility, you first need to get into the habit of documenting every part of your data process. This means standardizing your data across your organization. As we’ve already established, you need to strive for consistency in everything you do, which is impossible if no one in your company is taking the time to write things down.

This documentation should work seamlessly with your data integration process. One association management system provider developed their data architecture using just an Excel spreadsheet and a data integration platform, loading workflows from document to production and automating regular updates to their analytics warehouse. All they needed to do was maintain the Excel document.
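To show how documentation can do real work in a pipeline, here is a minimal sketch in which a data dictionary, written as CSV as a stand-in for a spreadsheet export, is loaded and used to check records. This is not the method used by the provider mentioned above; the dictionary layout, column names, and function names are all assumptions for illustration.

```python
import csv
import io

# Hypothetical data dictionary, as it might be exported from a spreadsheet.
DATA_DICTIONARY_CSV = """column,type,required,description
customer_id,str,yes,Unique customer identifier
email,str,yes,Primary contact email
lifetime_value,float,no,Total revenue to date
"""

TYPES = {"str": str, "int": int, "float": float}


def load_dictionary(text):
    """Parse the documented columns into a lookup keyed by column name."""
    rows = csv.DictReader(io.StringIO(text))
    return {
        row["column"]: {
            "type": TYPES[row["type"]],
            "required": row["required"] == "yes",
            "description": row["description"],
        }
        for row in rows
    }


def check_against_dictionary(record, dictionary):
    """List every way a record disagrees with the documented definitions."""
    problems = []
    for column, spec in dictionary.items():
        if record.get(column) is None:
            if spec["required"]:
                problems.append(f"missing required column: {column}")
            continue
        if not isinstance(record[column], spec["type"]):
            problems.append(f"{column} should be {spec['type'].__name__}")
    for column in record:
        if column not in dictionary:
            problems.append(f"undocumented column: {column}")
    return problems
```

Because the checks are derived from the document itself, updating the documentation updates the checks, which gives people a practical reason to keep it current.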

4. Avoid duplicating functionality

When you’re working across more than one application, function or system, it’s tempting to simply copy data between them. But in the long run, this significantly increases the time your developers spend updating duplicated datasets and prevents them from adding value in other, more critical areas.

Instead, you need to invest in an effective data integration architecture that automatically keeps your data in a common repository and format. Not only does this make it much simpler to universally update your data, it also prevents the formation of organizational silos, which often contain conflicting or even obsolete data. Now everyone can operate from a single version of the truth, without the need to update and verify every individual piece of information.

Success comes from sticking to your principles

According to Gartner, 85 percent of big data projects fail to get off the ground. To avoid becoming part of this unwanted statistic, you need to follow the right data architecture principles and build them into the very heart of your strategy and culture.

From validating your data at the point of entry to sharing a common vocabulary of key entities, ensuring you stick to these principles will accelerate your data strategy and give you the platform you need to meet modern customer demands faster and more efficiently.

By CloverDX

CloverDX is a comprehensive data integration platform that enables organizations to build robust, engineering-led, ETL pipelines, automate data workflows, and manage enterprise data operations.